One Brain, Multiple Eyes

Pi is a single-session agent. It doesn’t care where the conversation comes from — terminal, Slack, wherever. One session, one context. That’s the whole point of keeping it minimal.

But what if you want the same brain answering you on Telegram while you’re commuting, on Slack while you’re working, and on iMessage when your laptop is closed?

That’s the problem OpenClaw solves. One agent, multiplexed across every channel you use.

The Multiplexer Problem

Pi is stateless between sessions. It reads a conversation file, generates a response, writes the updated file. The conversation could come from anywhere — a terminal, a Slack webhook, a Telegram bot.

But Pi doesn’t handle the routing. Something else has to receive that Telegram message, figure out which conversation it belongs to, load the right session file, run Pi, take the response, and send it back to Telegram. That’s not agent work — it’s plumbing.

OpenClaw is the plumbing.

Routing: Which Agent, Which Session

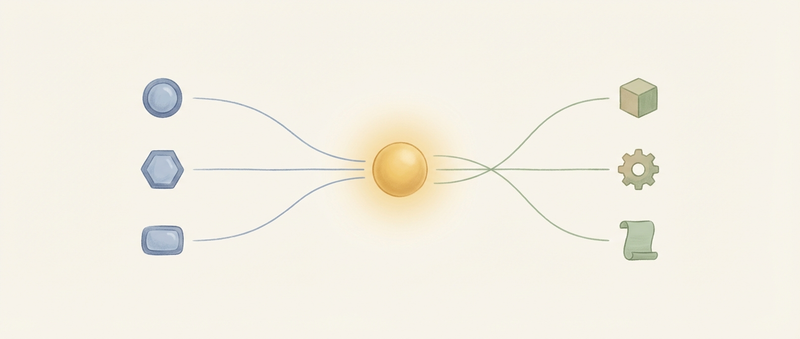

When a message arrives from any channel, OpenClaw makes two decisions: which agent handles it, and which session it continues.

The binding system handles the first question. You configure rules:

- Telegram DMs → personal agent

- Work Slack → work agent

- Discord server → coding agent

- Everything else → default agent

Bindings match on channel, peer (who’s talking), account (which bot account received it), guild (Discord server), or team (Slack workspace). Specific matches override general ones. A binding for a specific Telegram chat overrides the catch-all Telegram binding.

Threads inherit their parent’s binding. If you’re in a Slack channel bound to your work agent and someone starts a thread, that thread routes to the same agent — even though technically it has a different peer ID. The binding system checks the parent context first, then falls back to direct matching.
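The parent-first lookup can be sketched in a few lines of Python. This is a minimal illustration, not OpenClaw's code. It assumes a flat binding table keyed by (channel, peer ID) and a hypothetical `parent_peer_id` field on thread replies. The peer ID formats are made up.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical binding table keyed by (channel, peer ID).
BINDINGS: dict[tuple[str, str], str] = {
    ("slack", "C-work"): "work",
}
DEFAULT_AGENT = "default"


@dataclass
class Message:
    channel: str
    peer_id: str                          # where this message was posted
    parent_peer_id: Optional[str] = None  # set only for thread replies (assumed field)


def route(msg: Message) -> str:
    """Try the parent context first, then the message's own peer, then the default."""
    for peer in (msg.parent_peer_id, msg.peer_id):
        if peer is not None and (msg.channel, peer) in BINDINGS:
            return BINDINGS[(msg.channel, peer)]
    return DEFAULT_AGENT


# A thread reply has its own peer ID but inherits the channel's binding.
reply = Message("slack", peer_id="C-work/thread-42", parent_peer_id="C-work")
print(route(reply))  # work
```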

A concrete config:

```yaml
bindings:
  - match:
      channel: telegram
      peer:
        kind: dm
        id: "12345678" # Specific contact
    agentId: personal
  - match:
      channel: slack
      teamId: "T0123WORK"
    agentId: work
  - match:
      channel: discord
      guildId: "coding-server"
    agentId: coding
```

Matching runs from the most specific rule to the most general, and the first match wins. The final implicit rule is “everything else → default agent.”
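Here is a minimal resolver for the config above, written as Python data. It is a sketch only. The specificity tiers are an assumption chosen to illustrate "specific matches override general ones" and are not taken from OpenClaw's source. The catch-all Telegram binding and its `telegram-general` agent are hypothetical. That binding is listed first on purpose, to show that list position alone does not decide the outcome.

```python
from typing import Any

# The config above as plain data, plus a hypothetical catch-all Telegram binding.
BINDINGS: list[dict[str, Any]] = [
    {"match": {"channel": "telegram"}, "agentId": "telegram-general"},
    {"match": {"channel": "telegram", "peer": {"kind": "dm", "id": "12345678"}}, "agentId": "personal"},
    {"match": {"channel": "slack", "teamId": "T0123WORK"}, "agentId": "work"},
    {"match": {"channel": "discord", "guildId": "coding-server"}, "agentId": "coding"},
]

# Assumed specificity tiers (lower = more specific).
TIERS = {"peer": 0, "guildId": 1, "teamId": 1, "accountId": 2, "channel": 3}


def specificity(binding: dict[str, Any]) -> int:
    """A binding is as specific as the most specific field it matches on."""
    return min(TIERS[field] for field in binding["match"])


def resolve_agent(msg: dict[str, Any], default: str = "default") -> str:
    # sorted() is stable, so config order still breaks ties within a tier.
    for binding in sorted(BINDINGS, key=specificity):
        rule = binding["match"]
        if all(msg.get(field) == value for field, value in rule.items()):
            return binding["agentId"]
    return default  # the implicit "everything else" rule


print(resolve_agent({"channel": "telegram", "peer": {"kind": "dm", "id": "12345678"}}))  # personal
print(resolve_agent({"channel": "telegram", "peer": {"kind": "dm", "id": "99"}}))        # telegram-general
print(resolve_agent({"channel": "whatsapp"}))                                           # default
```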

Session Isolation

Once OpenClaw knows which agent handles a message, it needs to know which session to continue. This determines what context the agent sees.

OpenClaw constructs session keys from agent ID, channel, and peer. The dmScope setting controls how conversations group together:

- main: all DMs collapse into one session (unified context)

- per-peer: each contact gets their own session (isolated conversations)

- per-channel-peer: the same person on Telegram and WhatsApp gets separate sessions (platform-isolated)
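The sketch below shows which fields each dmScope value could fold into a session key. The colon-separated key format is hypothetical and is not OpenClaw's documented format. The sketch also assumes `peer_id` already identifies the same person across platforms (here, "alice"). In practice a Telegram user ID and a WhatsApp number differ, so sharing a per-peer session across platforms needs some identity mapping, which the sketch leaves out.

```python
def session_key(agent_id: str, channel: str, peer_id: str, dm_scope: str) -> str:
    """Session key for a direct message under a given dmScope (hypothetical key format)."""
    if dm_scope == "main":
        return f"{agent_id}:main"                    # every DM shares one context
    if dm_scope == "per-peer":
        return f"{agent_id}:dm:{peer_id}"            # one context per contact, across platforms
    if dm_scope == "per-channel-peer":
        return f"{agent_id}:{channel}:dm:{peer_id}"  # one context per contact per platform
    raise ValueError(f"unknown dmScope: {dm_scope!r}")


# Does Alice on Telegram share a session with Alice on WhatsApp?
for scope in ("main", "per-peer", "per-channel-peer"):
    same = session_key("personal", "telegram", "alice", scope) == session_key("personal", "whatsapp", "alice", scope)
    print(scope, same)
# main True
# per-peer True
# per-channel-peer False
```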

The trade-off is context bleed vs context isolation. With main, your agent knows you mentioned a project to your coworker and can reference it when your boss asks — useful for a unified assistant, awkward if you wanted those conversations separate. With per-peer, what you discussed with one person stays in that context.

Neither is universally right. It depends on how you want your assistant to behave.

Multi-Agent

OpenClaw supports multiple agents in one gateway. Each agent has:

- Its own model configuration (Opus for complex work, Sonnet for routine tasks)

- Its own sandbox settings (Docker container, filesystem access)

- Its own skills directory

- Its own sessions

The work agent doesn’t see personal conversations. The coding agent’s filesystem access doesn’t extend to your documents. Isolation isn’t just convenience — it’s the architecture.

A practical setup might look like:

- Personal agent on Opus for open-ended conversation

- Work agent on Sonnet with company Slack integration

- Coding agent on Sonnet with Docker sandbox and workspace access

Each agent is a Pi instance with different configuration. OpenClaw routes traffic to the right one.
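Per-agent configuration can be written as plain data. This is a minimal sketch: the field names, model labels, sandbox values, and the agents/<id>/skills and agents/<id>/sessions layout are all hypothetical. The point is that each agent carries its own model, sandbox, skills, and session storage, so nothing is shared by default.

```python
from dataclasses import dataclass
from pathlib import Path


@dataclass(frozen=True)
class AgentConfig:
    agent_id: str
    model: str              # illustrative labels, e.g. "opus" or "sonnet"
    sandbox: str            # assumed values: "none" or "docker"
    workspace_access: bool  # may the agent touch the shared workspace?

    @property
    def skills_dir(self) -> Path:
        return Path("agents") / self.agent_id / "skills"

    @property
    def sessions_dir(self) -> Path:
        return Path("agents") / self.agent_id / "sessions"


AGENTS = {
    a.agent_id: a
    for a in [
        AgentConfig("personal", model="opus", sandbox="none", workspace_access=False),
        AgentConfig("work", model="sonnet", sandbox="none", workspace_access=False),
        AgentConfig("coding", model="sonnet", sandbox="docker", workspace_access=True),
    ]
}

# No two agents share a sessions directory, so conversations never mix.
print(len({a.sessions_dir for a in AGENTS.values()}) == len(AGENTS))  # True
```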

Provider Failover

Cloud providers fail. Rate limits hit. Quotas run out. A production assistant can’t just stop working when Anthropic returns a 429.

OpenClaw maintains auth profiles: ordered lists of providers with automatic rotation. When one fails, OpenClaw puts that profile into cooldown and tries the next. Your config might list:

- Anthropic Claude (primary)

- OpenAI GPT-4 (fallback)

- Local Ollama (emergency)

The failover is transparent. The agent doesn’t know which provider answered. The conversation continues on whatever’s available.
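Ordered failover with cooldowns can be sketched as follows. This is not OpenClaw's implementation. The `ProviderError` exception, the callable provider interface, the 60-second cooldown, and the injectable clock are all assumptions. The caller gets back only the reply text, so it never learns which provider answered.

```python
import time
from dataclasses import dataclass
from typing import Callable


class ProviderError(Exception):
    """Raised by a provider call that fails (rate limit, outage, exhausted quota)."""


@dataclass
class AuthProfile:
    name: str
    call: Callable[[str], str]   # hypothetical interface: prompt in, reply out
    cooldown_until: float = 0.0  # skip this profile until the clock passes this value


def complete(profiles: list[AuthProfile], prompt: str, cooldown_s: float = 60.0,
             now: Callable[[], float] = time.monotonic) -> str:
    """Try profiles in order; a failing profile goes into cooldown and the next one is tried."""
    for profile in profiles:
        if now() < profile.cooldown_until:
            continue  # still cooling down from an earlier failure
        try:
            return profile.call(prompt)
        except ProviderError:
            profile.cooldown_until = now() + cooldown_s
    raise RuntimeError("every provider failed or is cooling down")


def rate_limited(prompt: str) -> str:
    raise ProviderError("429 Too Many Requests")


profiles = [
    AuthProfile("anthropic", rate_limited),
    AuthProfile("openai", lambda p: f"openai answered: {p}"),
    AuthProfile("ollama", lambda p: f"ollama answered: {p}"),
]
print(complete(profiles, "hello"))  # openai answered: hello
```

Because the clock is injectable, tests can advance time to check that a failed profile is skipped during its cooldown and retried afterwards.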

This enables cost optimization too. Route simple messages to the cheap model, complex ones to the expensive model. Use cloud providers for capability, local models for privacy. The routing layer makes these policies configurable.
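A policy like this can live in the routing layer as a plain function. The sketch below is hypothetical throughout: the model labels, the `private` flag, and the 2,000-character threshold are illustrative. Message length stands in as a crude proxy for "complex"; a real policy could use something smarter.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RoutingPolicy:
    cheap_model: str = "sonnet"      # labels are illustrative
    expensive_model: str = "opus"
    local_model: str = "ollama-local"
    complex_over_chars: int = 2000   # hypothetical proxy for "complex"


def pick_model(text: str, private: bool, policy: RoutingPolicy = RoutingPolicy()) -> str:
    """Private traffic stays local; long messages go to the expensive model; the rest go cheap."""
    if private:
        return policy.local_model
    if len(text) > policy.complex_over_chars:
        return policy.expensive_model
    return policy.cheap_model


print(pick_model("remind me at 5", private=False))  # sonnet
print(pick_model("word " * 500, private=False))     # opus
```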

Command Gating

Not everyone who can message your agent should be able to reset its memory or switch models. OpenClaw implements command gating — certain commands require approval.

Gated commands include:

- /reset, /new: clear session state

- /compact: force context compaction

- /model: switch the underlying model

- /think: adjust reasoning level

Who can run these commands? You configure an allowlist. For personal use, that’s just your user IDs. For team deployments, it might be specific Slack users or Discord roles. Everyone else can talk to the agent but can’t touch its configuration.
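The gate itself can be very small. In this sketch, a command is assumed to be the first word of the message. The allowlist is assumed to hold platform-qualified sender IDs such as telegram:12345678, which is a hypothetical format. Messages that aren't gated commands always pass.

```python
GATED_COMMANDS = {"/reset", "/new", "/compact", "/model", "/think"}

# Hypothetical allowlist of platform-qualified sender IDs (format assumed).
ALLOWLIST = {"telegram:12345678", "slack:U0OWNER"}


def is_allowed(sender: str, text: str) -> bool:
    """Anyone may chat; only allowlisted senders may run gated commands."""
    words = text.split()
    if not words or words[0].lower() not in GATED_COMMANDS:
        return True  # ordinary message, not a gated command
    return sender in ALLOWLIST


print(is_allowed("telegram:555", "what's on my calendar?"))  # True
print(is_allowed("telegram:555", "/reset"))                  # False
print(is_allowed("telegram:12345678", "/model opus"))        # True
```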

Media Staging

When someone sends an image or file, it has to reach the agent somehow. OpenClaw stages media into the sandbox workspace before Pi runs.

An image sent via Telegram:

- OpenClaw downloads it from Telegram’s servers

- Writes it to a temporary path in the sandbox workspace

- Includes the path in the message context

- Pi can read the file using its normal read tool

This keeps Pi’s tools minimal — it doesn’t need platform-specific media APIs. It just reads files. The infrastructure handles the translation from “Telegram photo” to “file at /workspace/tmp/image-123.jpg.”
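The staging step can be sketched as follows, assuming the attachment bytes have already been downloaded from the platform. The tmp/ subdirectory, the file naming scheme, and the bracketed note appended to the message are hypothetical. With a Docker sandbox, the host path would also need translating to the container's mount point (for example /workspace). This sketch skips that step.

```python
import tempfile
import uuid
from pathlib import Path


def stage_attachment(workspace: Path, data: bytes, suffix: str, caption: str) -> tuple[Path, str]:
    """Write downloaded attachment bytes into the workspace and point the message at them."""
    tmp_dir = workspace / "tmp"
    tmp_dir.mkdir(parents=True, exist_ok=True)
    path = tmp_dir / f"media-{uuid.uuid4().hex[:8]}{suffix}"  # hypothetical naming scheme
    path.write_bytes(data)
    # Pi never sees "a Telegram photo", only a path it can open with its read tool.
    message = f"{caption}\n[attachment: {path}]"
    return path, message


with tempfile.TemporaryDirectory() as workspace:
    path, message = stage_attachment(Path(workspace), b"\xff\xd8\xff\xe0", ".jpg", "What is in this photo?")
    print(message)
```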

What Happens to a Message

Trace a Telegram message through the system:

1. Receive: the Telegram Bot API delivers the message to OpenClaw

2. Route: the binding system matches channel + peer → agent

3. Session: the session key is constructed and the session file located

4. Stage: attachments are staged into the sandbox workspace

5. Gate: slash commands are checked against the allowlist

6. Directives: inline directives are extracted (/think high, /model gpt-4)

7. Run: Pi executes with the session and message

8. Chunk: the response is split to fit platform limits (Telegram: 4,096 characters per message)

9. Send: the response is delivered back to Telegram

The agent, Pi, handles step 7 (Run). Everything else is infrastructure, and the infrastructure exists so the agent can be simple.
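The steps around the agent call can be sketched as a small pipeline: directive extraction, the run itself, and chunking. This is not OpenClaw's code. The directive regex is a hypothetical syntax, and `run_pi` is a stub standing in for the real Pi invocation. Only the 4,096-character limit comes from Telegram's Bot API.

```python
import re

TELEGRAM_LIMIT = 4096  # sendMessage accepts 1-4096 characters of text

# Hypothetical inline-directive syntax: "/think <level>" or "/model <name>".
DIRECTIVE = re.compile(r"(?:^|\s)/(think|model)\s+(\S+)")


def extract_directives(text: str) -> tuple[dict[str, str], str]:
    """Step 6: pull inline directives out; return them and the remaining text."""
    directives = dict(DIRECTIVE.findall(text))
    return directives, DIRECTIVE.sub("", text).strip()


def run_pi(session_key: str, text: str, directives: dict[str, str]) -> str:
    """Step 7 stand-in: a real gateway would invoke Pi here.
    Returns a deliberately long reply so the chunker has work to do."""
    return f"[{session_key} think={directives.get('think', 'default')}] " + "x" * 5000


def chunk(text: str, limit: int = TELEGRAM_LIMIT) -> list[str]:
    """Step 8: split into pieces of at most `limit` chars, preferring newline boundaries."""
    chunks: list[str] = []
    while len(text) > limit:
        cut = text.rfind("\n", 0, limit)
        if cut <= 0:
            cut = limit  # no usable newline: hard split
        chunks.append(text[:cut])
        text = text[cut:].lstrip("\n")
    if text:
        chunks.append(text)
    return chunks


def handle(session_key: str, incoming: str) -> list[str]:
    directives, text = extract_directives(incoming)
    reply = run_pi(session_key, text, directives)
    return chunk(reply)  # step 9 would send each chunk in order


parts = handle("personal:main", "/think high summarize the thread")
print(len(parts), [len(p) for p in parts])  # 2 [4096, 931]
```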

The Gateway

All this runs in a single process: the gateway. It maintains channel connections, routes messages, manages sessions, handles hot-reload.

The gateway runs on your hardware. Sessions live on your disk. Credentials stay on your machine. When you turn it off, it’s off.

This is different from agent-as-a-service. With hosted agents, the provider holds your sessions, sees your conversations, decides when to update the model. With OpenClaw, you hold everything. The trade-off is operational overhead — you’re running infrastructure. The benefit is control.

Pi in Production

Pi’s bet is that minimal agents are better agents — less bloat, more focus, clearer failures.

OpenClaw doesn’t contradict that bet. It validates it. Pi stays minimal. The infrastructure stays separate. When you’re debugging why the agent gave a bad response, you’re looking at Pi. When you’re debugging why a message didn’t arrive, you’re looking at OpenClaw. The concerns don’t mix.

This is what happens when you take a minimal agent and ask “how do I use this everywhere?” The answer isn’t making the agent bigger. It’s building infrastructure around the agent.

One brain, multiple eyes. The brain stays simple. The eyes multiply.