The Art of Combining Opinions

BM25 finds exact word matches. Vector search finds semantic similarity. Each has blind spots the other covers.

The obvious next question: why not use both?

Modern search systems do. They run BM25 and vector search in parallel, then combine the results. But combining ranked lists is harder than it sounds. The technique that makes it work - Reciprocal Rank Fusion - is elegant enough to be worth understanding on its own.

The Problem With Scores

Let’s say you search for “authentication flow” and get these results:

BM25 Results:

- meeting-notes.md (score: 12.4)

- auth-design.md (score: 8.7)

- api-spec.md (score: 6.2)

Vector Results:

- auth-design.md (score: 0.89)

- login-flow.md (score: 0.84)

- meeting-notes.md (score: 0.71)

Which document is most relevant overall?

The naive approach is averaging scores. But that doesn’t work - BM25 scores and cosine similarity scores are completely different things. A BM25 score of 12.4 doesn’t mean the same thing as a vector score of 0.89. They’re not on the same scale, they’re not measuring the same thing, and adding them together is meaningless.

You could try normalizing - rescale both to a 0-1 range and then combine. But normalization is tricky. Which minimum and maximum do you rescale against, given that BM25 scores have no fixed upper bound? How do you handle documents that only appear in one list? The choices are arbitrary and affect results in ways that are hard to predict.
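To see how arbitrary those choices get, here is a minimal sketch of one common option, min-max normalization, applied to the example scores above. The helper name `min_max` and the decision to treat a missing document as 0 are assumptions made for illustration, not a recommended method.

```python
def min_max(scores):
    """Rescale a {doc: score} mapping to 0-1 using only that list's own range.

    Assumes at least two distinct scores; identical scores would divide by zero.
    """
    lo, hi = min(scores.values()), max(scores.values())
    return {doc: (s - lo) / (hi - lo) for doc, s in scores.items()}

bm25 = {"meeting-notes.md": 12.4, "auth-design.md": 8.7, "api-spec.md": 6.2}
vector = {"auth-design.md": 0.89, "login-flow.md": 0.84, "meeting-notes.md": 0.71}

bm25_n, vector_n = min_max(bm25), min_max(vector)

# Assumption: a document missing from a list contributes 0 for that list.
combined = {
    doc: bm25_n.get(doc, 0.0) + vector_n.get(doc, 0.0)
    for doc in set(bm25) | set(vector)
}

for doc, score in sorted(combined.items(), key=lambda kv: kv[1], reverse=True):
    print(f"{doc}: {score:.2f}")
```

Notice what happens to meeting-notes.md: its vector similarity of 0.71 is rescaled to exactly 0, simply because it was the last of the three results returned. Return a fourth, weaker result and its normalized score changes, even though nothing about the document did.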

Reciprocal Rank Fusion sidesteps all of this by ignoring scores entirely. It only looks at ranks.

Ranks, Not Scores

RRF’s insight: position in a ranked list tells you something, regardless of the scoring function that produced it.

If a document is ranked #1 by BM25, that means BM25 thinks it’s the most relevant. If the same document is ranked #2 by vector search, that means vector search thinks it’s nearly the most relevant. We don’t need to understand or compare the scores - the ranks carry the signal.

RRF computes a combined score based purely on positions:

RRF(document) = sum of 1/(k + rank) for each list

Where k is a constant (typically 60) and rank is the document’s position in each list, counting from 1. A document that is missing from a list contributes nothing for that list.

Let’s work through the example:

meeting-notes.md:

- BM25 rank 1: 1/(60+1) = 0.0164

- Vector rank 3: 1/(60+3) = 0.0159

- RRF: 0.0323

auth-design.md:

- BM25 rank 2: 1/(60+2) = 0.0161

- Vector rank 1: 1/(60+1) = 0.0164

- RRF: 0.0325

api-spec.md:

- BM25 rank 3: 1/(60+3) = 0.0159

- Vector rank: not present = 0

- RRF: 0.0159

login-flow.md:

- BM25 rank: not present = 0

- Vector rank 2: 1/(60+2) = 0.0161

- RRF: 0.0161

Combined ranking:

- auth-design.md (0.0325)

- meeting-notes.md (0.0323)

- login-flow.md (0.0161)

- api-spec.md (0.0159)

auth-design.md wins because it ranked highly in both lists. meeting-notes.md ranked #1 in BM25 but only #3 in vectors, so it comes second. Documents appearing in only one list score lowest.
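The arithmetic above fits in a few lines of code. This is a minimal sketch rather than a production implementation; the function name `rrf` and the choice to represent each result list as a plain list of document names are my own assumptions.

```python
from collections import defaultdict

def rrf(ranked_lists, k=60):
    """Fuse ranked lists of document ids with Reciprocal Rank Fusion.

    Ranks are 1-based. A document absent from a list adds nothing for it.
    """
    scores = defaultdict(float)
    for ranking in ranked_lists:
        for rank, doc in enumerate(ranking, start=1):
            scores[doc] += 1.0 / (k + rank)
    # Highest combined score first.
    return sorted(scores.items(), key=lambda kv: kv[1], reverse=True)

bm25_results = ["meeting-notes.md", "auth-design.md", "api-spec.md"]
vector_results = ["auth-design.md", "login-flow.md", "meeting-notes.md"]

fused = rrf([bm25_results, vector_results])
for doc, score in fused:
    print(f"{doc}: {score:.4f}")
```

Running it on the two example lists reproduces the combined ranking worked out by hand. Note that the original scores (12.4, 0.89, and so on) never enter the function at all.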

Why k=60?

The constant k controls how much rank position matters.

With a small k (say, 1):

- Rank 1: 1/(1+1) = 0.50

- Rank 2: 1/(1+2) = 0.33

- The gap between #1 and #2 is huge

With a large k (say, 60):

- Rank 1: 1/(60+1) = 0.0164

- Rank 2: 1/(60+2) = 0.0161

- The gap between #1 and #2 is small

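A larger k means positions matter less relative to appearing in multiple lists. Being #1 in one list isn’t much better than being #2. But appearing in both lists, even at mediocre ranks, beats appearing at the top of only one. With k=60, a document ranked 10th in both lists scores 2/70 ≈ 0.029, well above the 1/61 ≈ 0.016 earned by a document that tops a single list.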
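A quick way to get a feel for k is to compare the two extremes side by side. The sketch below is only an illustration; the helper `contribution` and the choice of rank 10 as a "mediocre" position are assumptions picked for the comparison.

```python
def contribution(rank, k):
    """Score a single list contributes for a document at a 1-based rank."""
    return 1.0 / (k + rank)

summary = {}
for k in (1, 60):
    summary[k] = {
        "gap_1_vs_2": contribution(1, k) - contribution(2, k),
        "top_of_one_list": contribution(1, k),
        "rank_10_in_both": 2 * contribution(10, k),
    }
    row = summary[k]
    print(
        f"k={k}: gap #1 vs #2 = {row['gap_1_vs_2']:.4f}, "
        f"top of one list = {row['top_of_one_list']:.4f}, "
        f"rank 10 in both lists = {row['rank_10_in_both']:.4f}"
    )
```

With k=1, topping a single list outscores sitting at rank 10 in both. With k=60 the relationship flips, which is the consensus-favoring behavior described above.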

The original RRF paper (Cormack, Clarke, and Büttcher, 2009) found k=60 worked well empirically across various datasets. It’s become the standard default. The intuition: it rewards consensus across methods while still giving some credit to top positions.

Query Expansion: Casting a Wider Net

RRF combines results from different search methods. But you can go further by generating variations of the query itself.

Instead of just searching for “authentication flow,” you use a small language model to generate alternative phrasings. Maybe “user login process” or “identity verification steps.”

Then you run four searches, not two:

- Original query → BM25

- Original query → vectors

- Expanded query → BM25

- Expanded query → vectors

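All four result lists feed into RRF. The lists from the original query get a higher weight to keep it dominant (for example, by multiplying their 1/(k + rank) contributions by a constant greater than 1), but the expansion helps catch documents using different terminology.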

This addresses a limitation I mentioned in the BM25 post: you might not remember the exact words used. If your notes say “login” but you search for “authentication,” the expansion might generate “login” and catch those documents.

Query expansion is essentially automated synonym matching, powered by a language model that understands how concepts can be rephrased.
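Fusing the four lists with the original query weighted higher might look like the sketch below. Everything specific here is an assumption for illustration: the function name `weighted_rrf`, the weight of 2.0 for the original query, the expanded-query result lists, and the onboarding.md file, none of which come from a real system.

```python
from collections import defaultdict

def weighted_rrf(weighted_lists, k=60):
    """Fuse (weight, ranking) pairs; each list adds weight / (k + rank)."""
    scores = defaultdict(float)
    for weight, ranking in weighted_lists:
        for rank, doc in enumerate(ranking, start=1):
            scores[doc] += weight / (k + rank)
    return sorted(scores.items(), key=lambda kv: kv[1], reverse=True)

# Result lists for the original query (from the earlier example).
original_bm25 = ["meeting-notes.md", "auth-design.md", "api-spec.md"]
original_vector = ["auth-design.md", "login-flow.md", "meeting-notes.md"]

# Hypothetical result lists for an expanded query such as "user login process".
expanded_bm25 = ["login-flow.md", "onboarding.md"]
expanded_vector = ["login-flow.md", "auth-design.md"]

fused = weighted_rrf([
    (2.0, original_bm25),    # assumed weight: the original query counts double
    (2.0, original_vector),
    (1.0, expanded_bm25),
    (1.0, expanded_vector),
])

for doc, score in fused:
    print(f"{doc}: {score:.4f}")
```

Setting every weight to 1.0 recovers plain RRF, so the weight is a single knob for how much you trust the expansion.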

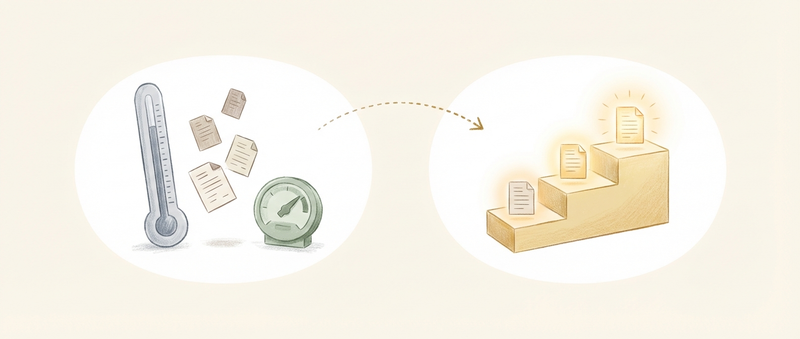

Why Hybrid Beats Pure

You might wonder: if vector search understands meaning, why bother with BM25 at all?

Because they have different failure modes.

BM25 fails when:

- You use different words than the document (“auth” vs “authentication”)

- You’re searching for concepts, not terms

- You can’t remember exact phrasing

Vector search fails when:

- You want exact matches (error messages, function names)

- The terms are rare or domain-specific

- The document is tangentially related but not actually relevant (high similarity, low relevance)

Consider searching for “ECONNREFUSED timeout.” BM25 will find documents containing that exact error string. Vector search might return documents about network errors generally - related, but not specifically about ECONNREFUSED.

Or consider searching for “how we handle user sessions.” Vector search can find documents about session management even if they never use the word “session.” BM25 would miss them if the terminology differs.

Hybrid search gets both. RRF ensures documents matching both methods rise to the top, while documents matching only one method still appear - just ranked lower.

Putting It Together

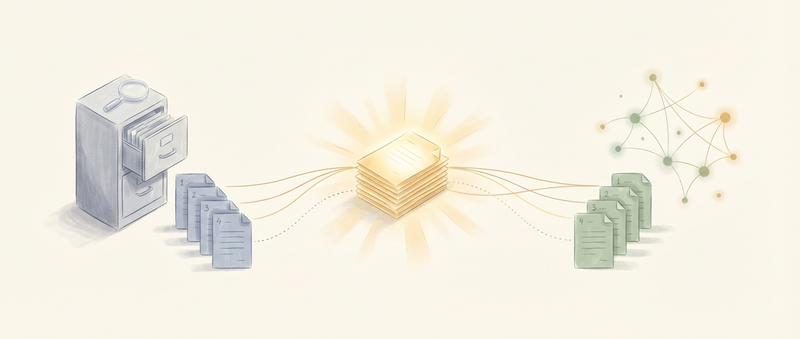

A hybrid retrieval pipeline looks like this:

- Query expansion: Generate variations of the original query

- Parallel retrieval: Run all queries against both BM25 and vector indexes

- RRF fusion: Combine all ranked lists into one

- Return results: Ranked by combined RRF score
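The steps above can be sketched end to end. This is a skeleton under stated assumptions, not a real implementation: `hybrid_search` is a hypothetical function, and `fake_expand`, `fake_bm25`, and `fake_vector` are stand-ins with canned results so the example runs without a language model or an index. For brevity it uses unweighted RRF.

```python
from collections import defaultdict
from concurrent.futures import ThreadPoolExecutor

def rrf(ranked_lists, k=60):
    """Plain (unweighted) Reciprocal Rank Fusion over lists of doc ids."""
    scores = defaultdict(float)
    for ranking in ranked_lists:
        for rank, doc in enumerate(ranking, start=1):
            scores[doc] += 1.0 / (k + rank)
    return sorted(scores.items(), key=lambda kv: kv[1], reverse=True)

def hybrid_search(query, expand, retrievers, k=60):
    """expand: query -> list of alternative phrasings.
    retrievers: callables mapping a query to a ranked list of doc ids."""
    # 1. Query expansion
    queries = [query] + list(expand(query))
    # 2. Parallel retrieval: every query against every retriever
    jobs = [(retrieve, q) for q in queries for retrieve in retrievers]
    with ThreadPoolExecutor() as pool:
        ranked_lists = list(pool.map(lambda job: job[0](job[1]), jobs))
    # 3. RRF fusion, 4. results come back sorted by combined score
    return rrf(ranked_lists, k=k)

# Stand-ins with canned results (hypothetical, for illustration only).
def fake_expand(query):
    return ["user login process"]

def fake_bm25(query):
    canned = {
        "authentication flow": ["meeting-notes.md", "auth-design.md", "api-spec.md"],
        "user login process": ["login-flow.md"],
    }
    return canned.get(query, [])

def fake_vector(query):
    return ["auth-design.md", "login-flow.md", "meeting-notes.md"]

results = hybrid_search("authentication flow", fake_expand, [fake_bm25, fake_vector])
for doc, score in results:
    print(f"{doc}: {score:.4f}")
```

Passing the expansion step and the retrievers in as callables keeps the fusion logic independent of whichever models and indexes you actually use.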

The whole thing can run locally. The expansion model, the embedding model, the BM25 index - all on your machine. No API calls, no network latency, no data leaving your control.

For most searches, this is what you want. It’s slower than pure BM25 (the expansion and embedding models add inference time), but the results are usually better for anything beyond exact-match queries.

RRF Beyond Search

RRF is useful anywhere you need to combine ranked lists from different sources, such as election aggregation, recommendation systems, or meta-analysis of studies. The principle is the same: ranks carry information even when scores don’t compare.

The elegance is in what RRF ignores. It doesn’t try to understand the scoring functions. It doesn’t normalize or calibrate. It just observes: this document ranked highly according to multiple independent methods. That’s a signal worth trusting.

It’s a reminder that sophisticated problems sometimes have simple solutions. Combining search rankings could involve complex machine learning, learned weighting schemes, elaborate normalization procedures. Or it could involve adding up reciprocals of positions. The simple approach often wins.

The Takeaway

BM25 and vector search answer different questions. BM25 asks “does this document contain these words?” Vector search asks “is this document about the same thing?” Hybrid search asks both.

RRF combines their answers without needing to understand or compare their scores. Documents that both methods like rise to the top. Documents that only one method likes still appear, just lower.

Query expansion catches terminology mismatches by searching for variations you didn’t think of.

Together, these techniques turn keyword search and semantic search from competing approaches into complementary ones. The result is search that handles both precise technical queries and vague conceptual ones - the best of both worlds.