Radix Trees Are Everywhere

I had no clue what a radix tree was until I started digging into SGLang, an inference server everyone claimed was “memory-efficient.” I ran it, read the docs, saw them throwing around “chunks” and “cache chunks” — nothing clicked.

Then I found mini-SGLang, the stripped-down version. And there, right in the code, was radix trees.

You know that moment when you learn something new and suddenly spot it everywhere? Like buying a specific car model and then seeing it on every street? That’s exactly what happened. Once I saw radix trees in SGLang, I started finding them in places that had absolutely nothing to do with LLMs. Turns out radix trees solve a specific class of problems so well that they keep showing up across decades and completely unrelated domains.

What Is a Radix Tree? (The Basics)

A radix tree (sometimes called a compressed trie or Patricia tree) is what you get when you take a trie and stop wasting space.

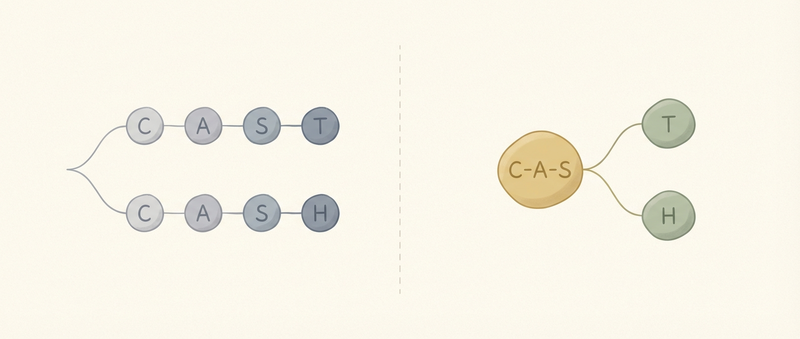

In a regular trie, each node represents one character. “CAST” and “CASH” share the C→A→S prefix, but still need separate nodes for T and H. A standard trie uses 5 nodes total. Still wasteful.

A radix tree compresses single-child chains. Those two words share “CAS”, so you get one node for “CAS” and two children for “T” and “H”. Three nodes instead of five.

What makes them useful:

- O(k) operations where k is key length (not O(n) where n is number of keys)

- Space compression — single-child edges get merged

- Built-in hierarchy — prefix relationships are just there

This isn’t academic. These are the data structures powering systems you use every day.

IP Routing: Where It All Started

The original use case: longest prefix match for packet forwarding.

Your routing table has entries like:

10.0.0.0/8→ interface A10.1.0.0/16→ interface B10.1.2.0/24→ interface C

When a packet arrives for 10.1.2.5, you need the most specific match. Hash tables can’t do this. You need a tree.

Network cards implement radix trees in hardware for line-rate performance. We’re talking about a data structure from 1960, published by Fredkin before the internet existed, now routing the internet’s traffic.

I never connected the dots before. I’ve configured routing tables, worked with network equipment, and had no idea what data structure was hiding underneath. That’s the thing about good abstractions: they work so well you forget they’re there.

Redis: Streams

But radix trees didn’t stay in networking. They migrated into places you’d never expect.

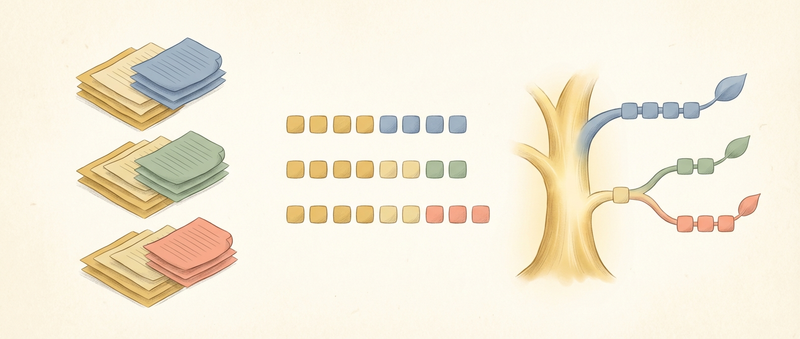

Redis uses radix trees to power Streams: its append-only log data structure introduced in Redis 5.0.

The problem Streams solve: you need an ordered, time-indexed log that supports range queries by ID and efficient consumer group tracking. Hash tables can’t give you ordered iteration. Skip lists (what sorted sets use) would work but waste memory for append-mostly workloads.

XRANGE mystream 1526985054069-0 1526985054079-0 # Give me entries in this ID range

antirez wrote Rax, a radix tree implementation, specifically for this. Stream entries are delta-compressed into listpack macro nodes, and those nodes are indexed by a radix tree keyed on entry IDs. Because IDs are time-based and stored in big-endian order, nearby entries share long prefixes. Exactly what radix trees compress well.

The results are dramatic. Storing one million entries in a Stream uses ~17 MB. The same data in a sorted set + hash takes ~220 MB. That’s 13x less memory, thanks to the radix tree plus listpack combination.

I’ve used Redis in production for years. Streams were just “another data type” to me. I never looked under the hood to see that the same data structure routing my packets was also indexing my event logs.

Ethereum: The Cryptographic Twist

Ethereum uses a Merkle Patricia Trie: a radix tree where every node is a cryptographic hash of its children.

This isn’t for storage. It’s for proofs.

When you want to verify that an account exists in Ethereum’s state, you don’t download the whole database. You download the path from root to leaf, and the hashes along the way. Each node’s hash is computed from its children, so if any data is tampered with, the root hash changes. The tree structure lets you prove membership without revealing everything. Light clients can verify state without running a full node.

The Ethereum yellow paper (section 4.3) describes this in detail. Every account, every contract storage slot, organized as a trie.

Same data structure that’s routing your packets and sorting your Redis keys, now securing billions in cryptocurrency.

SGLang: LLM Inference

Now we’re back to where I started.

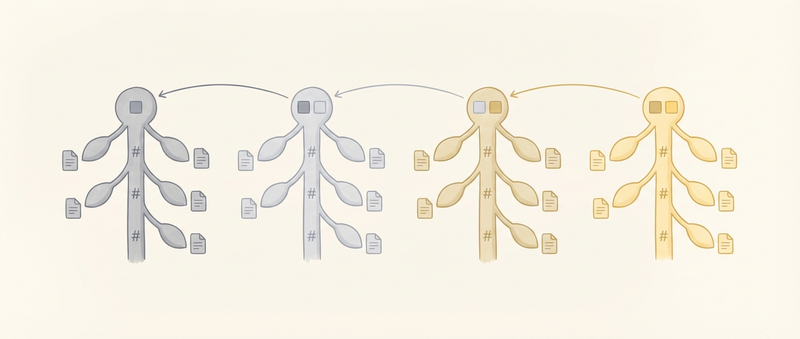

SGLang uses radix trees for KV cache prefix caching. Here’s the problem:

When you run LLM inference, the attention mechanism needs to keep track of all previous tokens (the KV cache). If you have 100 requests with the same system prompt, you’re computing the same KV cache 100 times. Wasteful.

SGLang stores token sequences as keys in a radix tree:

- Key:

[101, 203, 77, 55](the system prompt tokens) - Value: pointer to the GPU memory holding the KV cache

When request #42 arrives with the same system prompt, the tree finds the matching path. Zero recomputation. But here’s the kicker: radix trees also handle partial overlaps. If request #43 has [101, 203, 77, 55, 88] and request #44 has [101, 203, 77, 55, 99], the tree splits after the fourth token. Both share the first four tokens, but diverge after.

For long prompts, recomputing KV cache can dominate inference time. SGLang’s prefix caching eliminates redundant work across requests.

Hash tables can’t do this. Earlier approaches like vLLM’s block-level hashing (16-token blocks) require exact block alignment. SGLang’s token-level radix tree catches sharing opportunities that block hashing misses.

I saw this firsthand. Running SGLang with multiple concurrent requests, all sharing common prompts. The throughput jumped. GPU memory usage dropped. The radix tree was doing the heavy lifting. The RadixAttention paper has the benchmarks.

The Common Thread: Hierarchical Prefix Matching

So what do IP routing, Redis Streams, Ethereum state, and LLM inference have in common?

- Hierarchical data (IP prefixes, time-based entry IDs, account states, token sequences)

- Variable-length prefixes (not fixed blocks)

- Need for prefix operations (longest match, range queries, membership proofs, cache sharing)

Radix trees solve all of these naturally. The structure is the solution.

This is why the pattern keeps winning. Engineers aren’t lazy and keep copying code. Radix trees are just the right tool for this specific class of problems. When you have hierarchical data with shared prefixes, you’ll find yourself reaching for a radix tree.

The Takeaway

Don’t take away “learn radix trees.” Take away learn to recognize patterns.

I didn’t connect SGLang to IP routing. I didn’t see the connection between Redis Streams and Ethereum state. These were separate problems in my head until I learned the underlying data structure.

Pattern matching is one of the best skills you can develop in software engineering.

Bloom filters show up in distributed databases, web crawlers, and blockchain light clients. B-trees power databases, filesystems, and even Git. Skip lists are in Redis, LevelDB, and concurrency libraries.

When you see the same pattern in unrelated systems, you’re not seeing coincidence. You’re seeing how certain solutions just fit certain problems.

I was reading about “cache chunks” in SGLang docs, completely lost. Then I found what was under the hood. That’s the thing about patterns — once you see them, you can’t unsee them. Next time you’re evaluating an inference server and someone mentions “prefix caching,” you’ll know to ask what data structure they’re using. Next time you’re designing a system with hierarchical data and shared prefixes, a radix tree might be waiting for you.

This is what happened to me with routing tables and Redis. I used them for years without understanding what made them tick. Now I see the pattern everywhere. The data structure you just learned, powering half the systems you use — that’s not a bug. It’s just good engineering.